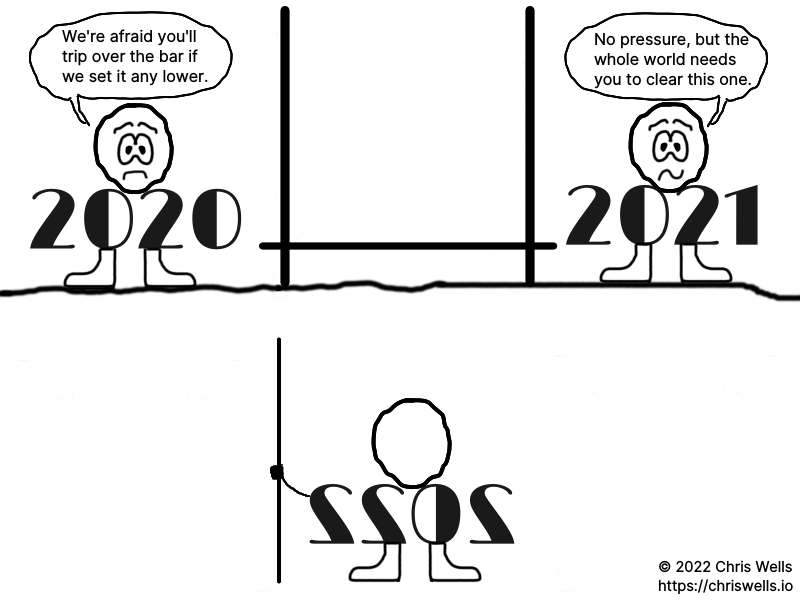

It's a really low bar for 2022.

I don't think I'm alone when I say that 2020 and 2021 have set some low expectations. However high or low you're setting the bar for 2022, I hope you clear it.


jq can be used to query NPM package data

If you follow IT security or even general IT news, you've likely read about the recent supply chain attacks against multiple widely used NPM packages. First, we saw a compromise of UAParser.js (ua-parser-js). That was followed by back-to-back hijacks of the Command-Option-Argument (coa) and rc packages. The affected versions of each package are well documented:

However, a developer might have dozens of projects on their system with each project having hundreds or even thousands of dependencies once you include child dependencies. How can you determine what NPM packages and versions are installed across an entire system to determine whether your system is at risk?

Every NPM package includes a package.json file containing data such as the package name, version, license, and dependencies. It's easy to search for all package.json files to find the installed NPM packages, but parsing the data can be a bit trickier. That's where jq, a command-line JSON processor, comes to the rescue.

Start by installing jq. On FreeBSD, it's as simple as running pkg install jq. Once you have jq installed, run the following command and wait patiently (it takes about 15 minutes on my system with over 28,000 packages):

sudo find / -type f -name 'package\.json' -exec \
  jq '. | {
    name: .name,
    version: .version,
    deprecated: .deprecated,
    license: (if (.license | type) == "array" then (.license | map(try .type // .) | join(", ")) else (try .license.type // .license) end),
    description: .description,
    packageFile: "{}"
  }' {} \; \
  > npm-packages.json 2>/dev/null

What is this command doing? We start by using find to search for files named package.json. The -exec parameter passes each result to jq, which accepts a string telling it how to process the JSON in the file. The example above converts it into a new JSON object with the following properties: name, version, deprecated, license, description, and packageFile. The value of each property is set by referencing the properties in package.json using dot notation: .name, .version, etc. Since .license can be one or an array of objects/strings, there is special handling to include all licenses and to use .license.type when present or just the .license string otherwise. Note the one outlier is packageFile whose value is set to "{}": this is simply the path to the file as provided by the find command enclosed in quotes. Finally, we redirect stdout to a file named npm-packages.json and stderr to /dev/null. The npm-packages.json file will contain one of our JSON objects for every package.json file that was found.
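If the license handling is hard to follow inside the jq filter, here is a minimal Python sketch of the same fallback logic. It is not part of the original command: the names license_text and summarize are made up for illustration, and it assumes a package.json file has already been parsed into a dictionary.

```python
def license_text(value):
    """Mirror the jq expression: prefer "type" when present, else the value itself."""

    def one(entry):
        # An object such as {"type": "MIT", "url": "..."} contributes its "type";
        # a plain string (or anything without a usable "type") is kept as-is.
        if isinstance(entry, dict) and entry.get("type"):
            return entry["type"]
        return entry

    if isinstance(value, list):
        # An array of licenses is flattened into one comma-separated string.
        return ", ".join(str(one(entry)) for entry in value)
    return one(value)


def summarize(package, path):
    """Build the same six-property object the jq filter emits (hypothetical helper)."""
    return {
        "name": package.get("name"),
        "version": package.get("version"),
        "deprecated": package.get("deprecated"),
        "license": license_text(package.get("license")),
        "description": package.get("description"),
        "packageFile": path,
    }
```

Calling summarize on a parsed package.json with the file's path should give one object per file, which is roughly what each jq invocation writes to npm-packages.json.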

Now that you have a list of all your locally installed NPM packages in a single JSON file, you can use jq to quickly run any queries you want. Use the following query to convert that file to a more human-friendly CSV format:

jq -s -r '["Name", "Version", "Deprecated", "License", "Description", "Package File"],
  (. | sort_by(.name, .version, .packageFile)[] | map(.))
  | @csv' \
  npm-packages.json > npm-packages.csv

The -s parameter tells jq to "slurp" every object in the file into one big array, and -r tells it to write raw strings instead of JSON-encoded ones. The first array defines what will be the column headings in our CSV file. Then the input is piped through sort_by() to make the file easier to browse. Finally, we redirect the output to a file named npm-packages.csv that can be opened with LibreOffice Calc, Excel, or any other tool that supports CSV files. Note that bundled submodules don't always have a name or version, so there could be a LOT of rows at the top of the CSV file with empty values.
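To make the "slurp" step more concrete, here is a rough Python sketch of the same idea: read back-to-back JSON objects into one list, sort them, and write CSV. The function names are hypothetical, and it assumes every name, version, and path is either a string or missing.

```python
import csv
import io
import json

COLUMNS = ["name", "version", "deprecated", "license", "description", "packageFile"]
HEADINGS = ["Name", "Version", "Deprecated", "License", "Description", "Package File"]


def slurp(text):
    """Read back-to-back JSON values into one list, similar to jq -s."""
    decoder = json.JSONDecoder()
    values, pos = [], 0
    while True:
        # raw_decode does not skip leading whitespace, so do it by hand.
        while pos < len(text) and text[pos].isspace():
            pos += 1
        if pos >= len(text):
            return values
        value, pos = decoder.raw_decode(text, pos)
        values.append(value)


def nulls_first(value):
    # Missing values sort ahead of strings, as null does in jq.
    return (value is not None, value or "")


def to_csv(packages):
    """Sort by name, version, and path, then render CSV text with headings."""
    ordered = sorted(
        packages,
        key=lambda p: tuple(nulls_first(p.get(k)) for k in ("name", "version", "packageFile")),
    )
    out = io.StringIO()
    writer = csv.writer(out)
    writer.writerow(HEADINGS)
    for package in ordered:
        writer.writerow([package.get(column) for column in COLUMNS])
    return out.getvalue()
```

Unlike @csv, the csv module only quotes fields when it has to, so the output will not be byte-for-byte identical to what jq produces.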

Couldn't you do all this with a single command using another pipe? Certainly, but this approach provides the option to perform additional extremely fast queries against the JSON file or to process the CSV using other tools depending on your needs. At this point, you could open npm-packages.csv in a spreadsheet editor and skim for the compromised package versions (easy since the CSV file is sorted by name and then version). Let's play with some example queries instead.

Find all installed versions (not just vulnerable versions) of the compromised packages sorted by name, version, and path to see if you use them at all:

jq -s -r '. |
  map(select(.name == "coa", .name == "rc", .name == "ua-parser-js")) |
  map({ name: .name, version: .version, packageFile: .packageFile }) |
  sort_by(.name, .version, .packageFile)' npm-packages.json

Check for a compromised version of each of the 3 packages:

jq -s -r '. |
  map(select(.name == "coa" and
    (.version == "2.0.3", .version == "2.0.4", .version == "2.1.1", .version == "2.1.3", .version == "3.0.1", .version == "3.1.3"))) |
  sort_by(.name, .version, .packageFile)' npm-packages.json

jq -s -r '. |
  map(select(.name == "rc" and (.version == "1.2.9", .version == "1.3.9", .version == "2.3.9"))) |
  sort_by(.name, .version, .packageFile)' npm-packages.json

jq -s -r '. |
  map(select(.name == "ua-parser-js" and (.version == "0.7.29", .version == "0.8.0", .version == "1.0.0"))) |
  sort_by(.name, .version, .packageFile)' npm-packages.json

You want to see an empty array returned for each of those commands; anything else means you have a problem on your hands. To be sure the query is running correctly, copy an installed version from the output of the example above and paste it into the command that checks for vulnerable versions. When doing that, you should see the installed version in the results.
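The three checks can also be folded into a single lookup table. The Python sketch below is one way to do that, assuming the objects from npm-packages.json have already been loaded into a list of dictionaries; the version sets are copied from the queries above, and find_compromised is a name invented for this example.

```python
# Known-bad releases, copied from the jq queries above.
COMPROMISED = {
    "coa": {"2.0.3", "2.0.4", "2.1.1", "2.1.3", "3.0.1", "3.1.3"},
    "rc": {"1.2.9", "1.3.9", "2.3.9"},
    "ua-parser-js": {"0.7.29", "0.8.0", "1.0.0"},
}


def find_compromised(packages, compromised=COMPROMISED):
    """Return the entries whose name and version match a known-bad release."""
    hits = [
        p for p in packages
        # Entries without a name fall back to an empty set and never match.
        if p.get("version") in compromised.get(p.get("name"), set())
    ]
    return sorted(hits, key=lambda p: (p["name"], p["version"], p.get("packageFile") or ""))
```

As with the jq commands, an empty list is the result you are hoping for. When the next package is hijacked, adding one more entry to the table should be enough.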

It's worth pointing out that an empty array doesn't necessarily mean you were never compromised: if you upgraded packages after installing a compromised version, you could have been impacted but no longer have it installed. In that case, refer to the security advisories linked above or the project issues on GitHub for help. These instructions are meant to help users check their systems at the time of a vulnerability disclosure and before upgrading packages.

These are just 3 of the latest popular packages to be compromised, and we can expect more in the future. When it happens, generate a new JSON file and adjust the queries above to check for the vulnerable versions.

In today's world, computers are more than just the machines under our desks. They're everywhere (in our phones, cars, and refrigerators), and those devices all run software. In this post, we're talking about the computers in your phone and your light bulbs as much as we're talking about the one on your lap. Source code (sometimes just source) is code written by a programmer to tell a device or app what to do and how to behave. If the source code is open, that means it's available for anyone to see and modify; if it's closed, then it's kept secret from anyone who doesn't work on it. That's where we get the phrase open source software.

With that explained, let's take a look at "What is Open Source Software?" by Brian Daigle:

"What is Open Source Software?" by Brian Daigle

Then move straight into "Open Source Basics" by Intel Software for a great analogy that shows how open source works:

"Open Source Basics" by Intel Software

Now that you understand what open source means and how open source projects work, let's delve into what entices developers to build open source software and the impact it's had.

Why would anyone give away something they worked hard on? Many open source contributors earn a very healthy living for the same kind of work that they donate in their spare time. People who choose to do that tend to be passionate about software in general or the specific projects they're working on—the kind of people you can expect to do great work. Obviously the motivation for donating your time is personal, but there are several common themes. Many do it for fun as much as any other reason. It provides a creative outlet for people who enjoy solving problems with software. To a developer, getting positive feedback from users is like an author hearing from readers who enjoyed their book; finding out other developers use your software is like discovering that other authors enjoyed your book. If people decide to contribute to your project, then you reap the rewards of their work in addition to their ideas. It's a collaborative world of give and take.

Why does it matter that you can access an app's source code? If you're using an app that has a glitch or is missing a feature you really want, it's truly empowering to be able to submit a patch that scratches your itch. When developers have access to source code, not only can they fix bugs and add features, but they can examine the code to see exactly how it works and what it does. That enables them to learn from each other while also inviting scrutiny. Does an app make your device vulnerable to hackers? Does it send your personal data to Microsoft and/or the NSA? You don't need to trust the company that makes it when you can see for yourself exactly what's going on behind the scenes. Developing in the open increases transparency and builds trust, which is conspicuously lacking with many of the big tech companies. Often, open source software springs from a desire to give users an alternative to those untrustworthy sources.

The Open Source Initiative raises awareness and adoption of open source software.

Another tremendous draw is the community. Being part of the broader open source community creates a connection with tens of millions of developers and many more users. When you're a contributor to a project, you share a much greater feeling of ownership and belonging with other members and users of that project. Of course, there are blogs, podcasts, forums, and chat rooms as well as conferences where like-minded people share their passion for open source and bond over their experiences in that world. Since open source doesn't mean perfect (did I mention bugs?), there are times when things don't go the way you want. That's when the community really matters. A couple of months ago, I had a problem with my file manager after a software update. I dropped into a chat room where the team who works on that app hangs out and asked for help. In exactly 2 hours and 1 minute, someone had found the cause and published a fix. It took another 9 minutes for me to pull the patch down and rebuild the app: problem solved. Bug fixes aren't usually that fast, but that level of response would have been impossible if I used the closed source Windows Explorer instead.

Finally, a common motivator for open source developers is a desire to give back. We've stood on the shoulders of giants for decades by reaping the rewards of open source, so contributing allows us to pay it forward. We're all working together to solve problems and make better software not just for ourselves but the entire world. The only efforts of this magnitude in all of history have been wars, but the open source community has been mostly self-mobilizing. Many open source advocates take a philosophical stance that all software should be open as a matter of principle to improve society. This is often referred to as "free as in beer vs. free as in freedom" (i.e., price vs. liberty): some people simply don't want to pay for software (like getting free beer) while others want the source code to always have the freedom to evolve and benefit the community.

Using Intel's recipe example, let's say that grandma had 5 children who each had 5 children who in turn had 5 children of their own. Now she's a great-grandma with 155 people plus herself using that recipe or a version of it. Most of them keep in touch and share changes that improve it. Eventually, those are going to be some really great cookies; even grandma would be impressed. The same was true for software: over time, with enough contributors and iterations, open source software began to take the lead against proprietary software. Companies and users found open source tools that outshone closed source tools, and it made sense to choose apps with most of the features they wanted while allowing them to add more. They started making contributions and donating money to open source projects they depended on. That had a snowball effect where the community of open source developers, apps, and users grew rapidly. The amount of code increased as it became easier to collaborate over the Internet, and these projects each represented a solution that anyone could use in whole or in part.

Imagine if a fantastic restaurant posted their recipes on the Internet for anyone to use, including other restaurants. What if most of the best restaurants posted their recipes on the Internet? Home cooked meals would level up a notch or ten regardless of whether you had to swap out some ingredients to make a recipe perfect. That's the impact open source has on creating software. Today, the vast majority of software projects use open source building blocks to avoid reinventing the wheel for every little task. A major benefit of using many of the same tools is that it increases compatibility. When a large portion of developers choose the same building blocks, you begin to find those tools everywhere. Starting with familiar tools makes it faster and easier to build new things. Open source is the number one reason we're able to have fancy things like affordable software for our phones and cars.

To get a feel for the prevalence of open source software and the level of collaboration involved, sit back and enjoy "What the Tech Industry Has Learned from Linus Torvalds," a TEDx talk by Jim Zemlin discussing the most widely used open source project in the world:

"What the Tech Industry Has Learned from Linus Torvalds" by Jim Zemlin

At this point, open source is nothing short of ubiquitous. The largest site for open source collaboration, GitHub, has over 65 million users and is home to over 200 million projects as of August 2021. That's 65 million people collaborating to build open source software on just one site. Because of all the freely-shared solutions available, it's easier to build software today than ever. The sheer volume and quality of open source projects empowers developers around the globe and beyond: NASA revealed that their Ingenuity helicopter on Mars is powered by Linux, and they open sourced the code they wrote to fly it. Open source is to software what blogging was to traditional publishing (as mentioned in the first video, WordPress is an open source blogging platform that powers 42% of all websites). Open source developers tore down the castle walls built by the early software giants like Microsoft and gave a voice to the masses.

The biggest lesson from the open source movement is that cooperation, collaboration, and open competition are superior to closed competition with few participants and even fewer gatekeepers. We're still competing to build the best solutions, but we're doing it in the open where anyone can contribute. It's democratization of software by inviting everyone to participate while allowing users to pick the winners. At the same time, it's socialization since everyone in the world reaps the rewards. The only barrier to access or participation is having a device to run the software, which gets cheaper with every passing year. Who owns all this software? None of us; yet I do, you do, we all do. It's the great equalizer and enabler of the 21st century. The people have taken stewardship of the most valuable intellectual property in the world, and they're using it to build a future where we all benefit.

Somehow it seems like good timing. You're welcome, America.

Happy querying!

After deploying a Helm chart into Kubernetes, it's easy to query the resources using kubectl with JSONPath expressions. That's not always an option, though. I recently needed to calculate the total CPU, memory, and storage allocated for a production sized deployment that wasn't deployed yet, but I didn't have a non-production environment large enough to deploy and then query. Wouldn't it be nice if you could query Helm charts using JSON?

The solution is to chain these 3 steps together:

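1. helm template to produce the YAML for the resources.
2. A YAML-to-JSON converter (the yamls2json alias below) to turn those YAML documents into JSON.
3. jq to query the JSON.

Start by installing jq. On FreeBSD, it's as simple as running pkg install jq.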

Next, create an alias to convert YAML documents into JSON. I'm not taking credit for this code snippet—it's pretty much what you'll find all over the web if you search for ways to convert YAML to JSON—although I had to tweak it to make it work for me. Note that the alias is named yamls2json (plural) because it expects multiple YAML documents as input, which is what helm template provides. Copy this snippet into your ~/.bashrc file:

alias yamls2json="python2 -c 'import json, sys, yaml;
from yaml import Loader as sl;
docs = list(yaml.load_all(sys.stdin.read(), Loader=sl));
print "\""["\"";
for i in range(len(docs)):
    json.dump(docs[i], sys.stdout);
    if i != len(docs) - 1:
        print "\"",\\n "\"";
print "\"\\n"]\\n"\"";
'"

Then source the file so the alias is available in your current shell: . ~/.bashrc

Now you can run queries against Helm charts without deploying (and even specify one or more values files):

helm template my-blog bitnami/wordpress -f dev-sizing.yaml | yamls2json | jq -r '. | map(select(.spec.template.spec.containers != null))[] | .metadata.name as $pod | .spec.template.spec.containers[] | [ $pod, .name, (.resources.limits.cpu | tostring), (.resources.requests.cpu | tostring), .resources.limits.memory, .resources.requests.memory ] | @csv'
"my-blog-wordpress","wordpress","null","300m",,"512Mi"
"my-blog-mariadb","mariadb","null","null",,

The output can be redirected to a CSV file with the following columns, in the order the query emits them: resource name, container name, CPU limit, CPU request, memory limit, and memory request.

To query persistent volume claims, use the following:

helm template my-blog bitnami/wordpress -f dev-sizing.yaml | yamls2json | jq -r '. | map(select(.spec.volumeClaimTemplates != null))[] | .metadata.name as $pod | .spec.volumeClaimTemplates[] | [ $pod, .metadata.name, .spec.resources.requests.storage ] | @csv'
"my-blog-mariadb","data","8Gi"

Output columns are, in the order the query emits them: resource name, volume claim template name, and requested storage.

This is a very simple example, but I've found this little trick can save a lot of time when working with charts that have many subcharts and a lot of deployed resources. It also makes it much easier to compare sizing of different environments with multiple deployment options enabled or disabled.
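Getting from those CSV rows to a total still means adding up values like 300m and 512Mi. Here is a small Python sketch of that last step, assuming the CSV text from the queries above is available as a string. The helper names are hypothetical and the suffix table is deliberately partial.

```python
import csv
import io
from decimal import Decimal

# Multipliers for common Kubernetes quantity suffixes (a partial list for this sketch).
SUFFIXES = {
    "Ki": Decimal(1024),
    "Mi": Decimal(1024) ** 2,
    "Gi": Decimal(1024) ** 3,
    "Ti": Decimal(1024) ** 4,
    "m": Decimal("0.001"),
    "k": Decimal(1000),
    "M": Decimal(1000) ** 2,
    "G": Decimal(1000) ** 3,
    "T": Decimal(1000) ** 4,
}


def parse_quantity(text):
    """Convert a quantity such as "300m" or "512Mi" to a plain Decimal."""
    text = text.strip()
    # Check the longer (binary) suffixes before the single-letter ones.
    for suffix in sorted(SUFFIXES, key=len, reverse=True):
        if text.endswith(suffix):
            return Decimal(text[: -len(suffix)]) * SUFFIXES[suffix]
    return Decimal(text)


def total(csv_text, column):
    """Sum one zero-based column, skipping cells that are empty or "null"."""
    rows = csv.reader(io.StringIO(csv_text))
    cells = (row[column] for row in rows if len(row) > column)
    return sum((parse_quantity(c) for c in cells if c not in ("", "null")), Decimal(0))
```

For the container query, column 3 holds the CPU requests (in cores after conversion) and column 5 the memory requests (in bytes); for the volume claim query, column 2 holds the storage requests.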

Of course, you can query anything you want from the resources—it's not limited to sizing. Output the raw JSON to find the fields you want to query:

helm template my-blog bitnami/wordpress -f dev-sizing.yaml | yamls2json | jq '.'

Use the examples above to get started writing your own JSON queries for Helm charts.

But when I watched videos of eye-witness accounts, including some in front of the church where I tied my shoes and the corner where I nervously loitered, it gave me a vital bit of perspective: I happen to be alive, and there's no cosmic law entitling me to that status. Being alive is just happenstance, and not one more day of it is guaranteed.

This thought instantly relieved me of any angst over that particular day's troubles: technical issues on my website, an unexpected major expense, an acute sense that I'm getting old.

Those problems remained, and they are real problems. But they immediately became only relatively important. They lost their sense of absolute importance. In fact, any personal problem I could think of now seemed to be a small, aesthetic complaint about the grand, mysterious gift of being randomly, unfairly alive that day.

It's fairly cliché to say that we're all living on borrowed time, so it's no surprise how rarely we consider the truth in it (although David is clearly an exception). Many people don't bother at all unless they've experienced a life-threatening situation (at least one that felt that way for a moment) or after a close personal loss. Life is fleeting, though, and tomorrow isn't promised. That's as true for ourselves as it is for the people in our lives. Our time to live is an incredible gift card with an unknown balance: we can spend it on whatever we want, but we never know when that balance will abruptly run out.

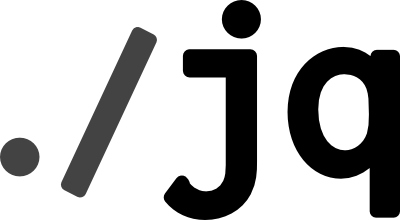

My father feeding me

Even as a child, I was acutely aware that not everyone grew old, and I expected to live a short life. My father died at the age of 25, when I was 5 months old. By the time I turned 21, I recognized that he had a wife and my older brother to support at that point in his life. I also realized that he would've been middle-aged if he'd lived, which felt strange because I'd never imagined him older than he was in photos—much less with grey in his hair or creases by his eyes. When I turned 26, I couldn't help but feel mixed emotions as I appreciated the opportunity of another year while remaining conscious that not everyone gets that.

I was 32 when my 37-year-old brother died from a heart attack. Was it tragic to only get half a life or a blessing to live 50% longer than our father? Whether you see the glass as half empty or half full, the simple truth is that none of it was guaranteed to begin with. Most people expect to outlive their parents, but losing a sibling can catch you off-guard when you're still naive enough to consider yourselves to be young. From that day forward, I would be conscious of the narrowing gap between my own age and the arbitrary number of years he was granted. Then on my 38th birthday, I became older than my older brother. That's not the kind of achievement that makes one proud like the first time you beat him 2 out of 3 games of checkers or realizing that what might've been a lucky streak was actually the beginning of always being able to outrun your older brother.

I'm 41 now, which is a number that reeks of middle age at best. It's also 4 years older than my brother and an incredible 16 years older than my father. When I think about living on borrowed time, those continually increasing gaps are what come to mind. Those years could've belonged to someone else—someone younger, more deserving, or more grateful—but I was the one who lived them. Every passing year and the experiences they deliver feel like one-sided gifts from the universe that I'll never be able to reciprocate. Last month, I watched my brother's oldest daughter walk down the aisle. He undoubtedly deserved that experience more than I did. Yet while I was there, he was 8 years away. People love to talk about how unfair life is, but we rarely bring it up when we're the ones getting the lucky breaks. What would a loved one have done with that time if it had been given to them instead of us?

When we borrow money, we typically repay it with interest. Life doesn't demand anything in return, though. The default option is to squander our years reaping life's rewards without ever giving back. While life hasn't always been easy, I'm not blind to the fact that I've been extremely fortunate. I was born mostly healthy to a supportive family in a first world country. I was given educational and employment opportunities that enabled me to work my way into a career that more than provides for my family. There's no way I could repay the world in full, but I've been considering how I could start paying some interest on my debt to the universe.

What I'm discovering is that it's easy to let life race by and to marvel at how fast it goes, but it's much more difficult to live with intent. Finding the "right way" to contribute or the most fitting course of action to make an impact isn't a simple task. I'm reminded of the Voltaire quote: "the perfect is the enemy of the good." People often avoid doing things due to a desire for perfection when it's far more beneficial to just get started. Beginning is no small feat. I often stack obstacles in front of my dreams to keep them at arm's length: "I'll start on X as soon as I finish Y and Z." Of course, with Y and Z always changing, I may never get around to X at all. That's not how I want this to play out, so I need to be more deliberate in my search and my choices.

You won't be remembered for the good intentions that you never acted on. No one will speak at your funeral about the things you would have done if given a few more years. Your grandchildren won't hear stories about the person you wanted to be. Few of us could ever truly repay our debt to the universe, but we can all afford to pay some interest on it. If you're wondering when to start, it's clearly now since you never know when "later" will be removed as an option.

As for me, I want to stop allowing indecision to be an obstacle and accept that no contribution is too small. I'll give blood more often because I hope it saves a stranger's life one day. I'll contribute to open source projects because those projects have a positive impact on others. I'll help people where I can and I'll look for more opportunities to do so. If posts like this inspire others to do the same, then I'll write more of them. Maybe one day I'll find myself with the time and influence to have a larger impact, but these are all things I'm able to do right now without waiting for some perfect time in a future that isn't promised.

The AWS Summit has several concurrent tracks, so the hard part is deciding where you most want to be at a given time. To make that easier, download AWS Americas Summits for Android and start creating your schedule a day or two in advance. Be careful NOT to download the other app that claims to be official, because it didn't have the correct event details when I tried it. Once you have the list of events, note that there are 30-minute introductory presentations as well as 1-2 hour workshops that go into more detail. I added the most tempting sessions to my schedule in the app even when there were conflicts, which made it easier to switch to a fallback when a session was full or for any other reason. Besides the app, be sure to bring a laptop if you intend to participate in workshops. They had free Wi-Fi, of course, but I prefer to tether my laptop to my cell phone. Finally, bring a snack or two so you don't have to waste time hunting down vending machines.

I started out by sitting in on 3 half-hour lecture style presentations. It could have easily been different for other sessions, but these presentations were too high level for me. To be fair, I had trouble hearing the presenter for "Cloud-Based Application Deployment with AWS CloudFormation," but it seemed like anyone with a bit of CloudFormation research under their belt could skip it. "Getting Started with IoT on AWS" had a little more depth, but it still only scratched the surface of IoT Core. Finally, "AWS Lambda: Best Practices and Common Mistakes" gave a few tips that may help the beginner but not someone who's actively using Lambda functions. I don't want to sound overly critical of these sessions: given that they're only 30 minutes long, don't expect them to be any more than introductions to help determine whether a service you're unfamiliar with is worth a deeper dive when you get home. With some previous research into both CloudFormation and IoT Core as well as several months of Lambda experience, I did still take a few notes.

Next, I joined the "Design and Implement a Serverless Media-Processing Workflow" lab that used Step Functions to extract metadata from images, save the data to DynamoDB, and generate thumbnails of the images. One great thing about the lab setup was that they gave us a URL and redemption code at the beginning giving us access to a preconfigured AWS account to work in. The instructor said it's a new approach for them, and I'm sure it sped things up tremendously to skip helping people set up IAM roles and adding credits to everyone's accounts. While I expected to get into the details of media processing during this workshop, even 2 hours wasn't enough time for that. The real focus was on how Step Functions work and configuring their pre-written Lambda functions to execute as Step Functions. Since I'm familiar with Lambda but had never used Step Functions, that was great for me. It was helpful to walk through the setup to see how to implement a basic sequence flow. For anyone interested in trying it for themselves, the entire image processing workshop is available on GitHub.

Last but not least, I made it to the "AWS DeepLens Workshop: Building Computer Vision Applications" session that was so popular it was held 3 times during the day. If you want to participate in this one, show up a half hour early or the room will fill up. My concern going in was that I'd have to plug a DeepLens into my laptop to participate, but it's a Chromebook that isn't set up for development—just to remote my home workstation. They had it covered, though: everyone worked from the DeepLens itself, which comes with Ubuntu installed. It was connected to a monitor via Micro HDMI, with a USB keyboard/mouse, and logged into an AWS account with the IAM roles preconfigured. The lab concept was very cool: we deployed a machine learning model that recognizes faces in the device's live video stream, crops them out, and uploads every face as a photo to S3. Each new image triggers a Lambda function that infers an emotion from the face and stores the result in DynamoDB. Finally, we created a CloudWatch dashboard to monitor the incoming data stream and chart the number of times each emotion had been recognized in the room. As in the previous workshop, we focused on how to push ML models and a Lambda function to the device rather than the actual code (but did skim through some at the end). Of course, with the DeepLens workshop available on GitHub, you can go home and dig through the code in as much depth as you want.

I'm not a collector of cheap swag, so I didn't even browse to see what vendors were giving away (though there appeared to be plenty available if that's your jam). I was there specifically to level-up my AWS game. If you're entirely new to AWS, it could be beneficial to attend a variety of short sessions to get an overview of many different services. For those with some prior exposure to AWS, the workshops are definitely where it's at. Especially if you're a hands-on person, I recommend pursuing a small number of in-depth workshops over many brief presentations. The few notes I have from the presentations may prove useful, but we'll almost certainly leverage Step Functions at work soon and I've been brainstorming ways to put DeepLens and its underlying tech to use. If you have the chance to attend an AWS Summit nearby, go for it. Immersing yourself in that environment for a day could be just what you need to get your creative juices flowing.

There's one core feature for a logger: write text to a target location in a predictable format while ignoring messages deemed unimportant in a given environment. Thus, the common variants for logger instances are the log level, the log format, and the log destination.

Sitka allows configuration of log level and format at both a global and instance level. You can set each using environment properties or the constructor. In production environments, many high traffic sites only log exceptions, errors, and warnings by default. The ability to increase the log level for a specific logger instance at runtime means you can zero in on the component having trouble without generating gigs of log data. To change the log destination, you can simply redirect stdout/stderr or provide a custom function that sends the data to any store using the transport of your choosing. Since each of these common variants is configurable, Sitka really should work for almost everyone. For details and code examples, refer to the Sitka documentation on GitHub.

When you're debugging an application, what makes a log message useful? Think about why stack traces are so helpful: it's due to the context they include from the time of the exception. Productive log messages expose context that provides insight into the point-in-time state of the execution environment. Sitka makes it easier to surface those details by including special variables in the log format and/or individual messages. For example, an environment variable in the log format can reveal which container (or server if that's the life you're living) is seeing frequent errors so you can remove it from the cluster.

Besides environment variables, you can also set custom context variables in code (globally and at the instance level) to make them available in log messages. This level of control can go to whatever depth you desire, which makes it easier to write consistently useful log messages. For example, if your User class has an ID property and you want to know what account encountered any given issue, you could set the ID in the logger's context and include it in the format for that logger instance. Then every log entry from your User class would include the ID without explicitly adding it to the log messages. The Sitka documentation on GitHub includes an advanced example showing how to leverage this functionality in an AWS Lambda environment.
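To make the idea concrete without leaning on Sitka's actual API, here is a minimal sketch of the same concepts in plain shell: a log level threshold, an environment variable in the format, and a context value that shows up in every entry. The names log, level_rank, LOG_LEVEL, and LOG_CTX are assumptions made up for this illustration and are not taken from Sitka.

```shell
# Hypothetical sketch in plain shell, NOT Sitka's API.
# LOG_LEVEL and LOG_CTX are assumed variable names for this example.

# Map a level name to a number; lower means more severe.
level_rank() {
  case "$1" in
    ERROR) echo 0 ;;
    WARN)  echo 1 ;;
    INFO)  echo 2 ;;
    DEBUG) echo 3 ;;
    *)     echo 2 ;;   # treat unknown levels as INFO
  esac
}

log() {
  msg_level="$1"; shift
  # Ignore messages less severe than the configured threshold (default WARN).
  if [ "$(level_rank "$msg_level")" -gt "$(level_rank "${LOG_LEVEL:-WARN}")" ]; then
    return 0
  fi
  # Format: level, host from the environment, context, then the message.
  printf '[%s] [host=%s] [%s] %s\n' \
    "$msg_level" "${HOSTNAME:-unknown}" "${LOG_CTX:-}" "$*"
}
```

With LOG_LEVEL=WARN, HOSTNAME=web-1, and LOG_CTX="userId=42", calling log INFO 'cache miss' prints nothing, while log ERROR 'payment failed' prints [ERROR] [host=web-1] [userId=42] payment failed. Neither message had to mention the host or the user ID; the format and context supplied them.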

Logs come from trees, and the Sitka spruce is known for its high strength relative to its light weight. Its wood was commonly used to build early airplanes including the Wright brothers' first successful heavier-than-air powered aircraft and is still used to build many string instruments and other products. Sitka aims to remain a lightweight but powerful logger that feels at home in the cloud.

Let's face it: not many people get excited about a logger. Frankly, I'm surprised you read this whole post. You should try Sitka in your next project and let me know what you think in the comments below or using my contact page.

]]>When I gave it a try, the obstacle was that an alias always leaves a space between the expanded alias and its parameters, so the parameters can't be appended directly to a URL. As usual, Stack Exchange provided the right direction: wrap a temporary function in the alias. With some tweaking, I was able to add this alias to ~/.bashrc:

alias hints='tmp_f(){ URL_PARAM=$(echo "$@" | sed "s/ /-/g"); lynx -accept_all_cookies https://devhints.io/"$URL_PARAM"; unset -f tmp_f; }; tmp_f'

After reloading the file with source ~/.bashrc, you can simply type hints vim to search for vim on Devhints in Lynx (replace vim with any topic, of course). That reminded me of the Quick Search feature in Firefox, so I decided to create a few more aliases to mirror what I use in Firefox and see how well the same sites work as pseudo command line tools:

alias abbr='tmp_f(){ URL_PARAM=$(echo "$@" | sed "s/ /+/g"); lynx -accept_all_cookies https://www.acronymfinder.com/"$URL_PARAM".html; unset -f tmp_f; }; tmp_f'

alias dict='tmp_f(){ URL_PARAM=$(echo "$@" | sed "s/ /+/g"); lynx -accept_all_cookies http://www.dictionary.com/browse/"$URL_PARAM"; unset -f tmp_f; }; tmp_f'

alias ddg='tmp_f(){ URL_PARAM=$(echo "$@" | sed "s/ /+/g"); lynx -accept_all_cookies https://duckduckgo.com/lite/?q="$URL_PARAM"; unset -f tmp_f; }; tmp_f'

alias duck='ddg'

alias ports='tmp_f(){ URL_PARAM=$(echo "$@" | sed "s/ /+/g"); lynx -accept_all_cookies https://www.freshports.org/search.php?query="$URL_PARAM"; unset -f tmp_f; }; tmp_f'

alias wiki='tmp_f(){ URL_PARAM=$(echo "$@" | sed "s/ /+/g"); lynx -accept_all_cookies https://en.wikipedia.org/w/index.php?search="$URL_PARAM"; unset -f tmp_f; }; tmp_f'

It's no surprise that these sites all look good in Lynx. Now the question is how often I'll remember to use these new aliases.
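Since the temporary function is what actually receives the arguments, a plain shell function in ~/.bashrc can do the same job without the alias wrapper. The following is a minimal sketch under that assumption, not a drop-in replacement I've battle-tested: join_words is a hypothetical helper name, while the lynx flag and URLs are the ones from the aliases above. Only two of the commands are shown; the others follow the same pattern.

```shell
# Functions and aliases with the same name clash, so drop the aliases first.
unalias hints ddg 2>/dev/null || true

# join_words SEP WORD...
# Hypothetical helper: prints the words with every space replaced by SEP
# (a single character), like the sed call in the aliases.
join_words() {
  _sep="$1"; shift
  printf '%s\n' "$*" | tr ' ' "$_sep"
}

# Devhints uses dashes between words.
hints() {
  lynx -accept_all_cookies "https://devhints.io/$(join_words - "$@")"
}

# DuckDuckGo Lite takes a query string with plus signs between words.
ddg() {
  lynx -accept_all_cookies "https://duckduckgo.com/lite/?q=$(join_words + "$@")"
}
```

Usage is unchanged: hints vim scripting opens https://devhints.io/vim-scripting. Quoting the whole URL also keeps the shell from treating the ? in the query string as a glob character.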

]]>I'm definitely not a shell scripting guru, but I thought these libraries could be useful for others as well. I leveraged them to create scripts that perform the full setup and configuration of my host and jail including the database and web server. I've released the libraries as part of a freebsd-scripts project on GitHub along with a couple of simple scripts to back up Apache and the system configs. I also added the portsfetch script, which I use to fetch the quarterly ports tree. I'm sure I'll share more shell scripts over time, so this gives me a single project to group them under. If you use them to create something useful, tell me about it in the comments below.

]]>